Trump Administration Faces Push for Mandatory AI Safety Reviews Before Government Contracts

In a move that could fundamentally reshape how artificial intelligence companies do business with the federal government, a nonprofit organization is making waves with a bold proposal that puts safety front and center. Americans for Responsible Innovation, a group dedicated to shaping smart AI policy, has stepped into the spotlight with a recommendation that’s as straightforward as it is potentially transformative: if you want to work with Uncle Sam, your AI needs to prove it’s safe first.

The proposal, unveiled in mid-May 2026, isn’t trying to regulate every chatbot or recommendation algorithm out there. Instead, it zeroes in on what industry insiders call “frontier” models—the heavyweight champions of the AI world. These are the most advanced, most capable, and yes, potentially most dangerous artificial intelligence systems being developed today. Think of the difference between a pocket calculator and a supercomputer, and you’ll get a sense of the gap between everyday AI tools and these frontier systems. ARI’s argument is simple but powerful: before these technological titans get anywhere near sensitive government operations, someone needs to kick the tires and make sure they won’t veer off the road.

The Problem with a Blank Check for AI

What sparked this recommendation wasn’t just theoretical concern about what might go wrong someday. ARI had already raised red flags about a very real, very current problem lurking in the fine print of government AI contracts. Back in early April 2026, the organization sounded the alarm with the General Services Administration—the agency that essentially acts as the government’s landlord, procurement officer, and property manager rolled into one. The issue? Language buried in existing regulations that allows AI systems to be used for “any lawful purpose.”

On the surface, that might sound reasonable enough. After all, why would the government want to limit how it uses tools it’s already paid for? But ARI saw something more troubling: a loophole big enough to drive a semi-truck through. These “any lawful use” clauses essentially hand AI systems a blank check once they’re inside government networks. There’s no meaningful oversight, no specific boundaries, and no real accountability for how these powerful tools get deployed after the contract is signed. It’s like buying a Swiss Army knife and then using it to perform surgery: technically possible, perhaps even lawful, but definitely not what anyone had in mind when they made the purchase.

When Technology Moves Faster Than Government Can Keep Up

If you’re wondering whether this is all just regulatory overreach or paranoid hand-wringing, consider the numbers ARI is working with. Since 2010, the computational power devoted to AI has been growing by a factor of 4.2 every year. Let that sink in for a moment. We’re not talking about steady, predictable growth like you might see in traditional industries. This is exponential expansion that would make even Silicon Valley’s most optimistic venture capitalists do a double-take.

To put it in perspective, imagine if your car got 4.2 times faster every single year. In just five years, your morning commute vehicle would be roughly 1,300 times faster than it is today, far past the sound barrier. That’s the kind of trajectory we’re looking at with AI computing power, and it creates a real problem: the technology is evolving far faster than the government’s ability to understand it, evaluate it, or regulate it effectively. By the time policymakers wrap their heads around what today’s AI can do, tomorrow’s systems have already leaped several generations ahead. ARI’s proposal is essentially an attempt to install a checkpoint on this runaway train: a mandatory pause that forces everyone to assess what they’ve built before unleashing it into the wild world of government operations.
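For readers who want to check the compounding behind that comparison, here is a minimal sketch in Python. It takes the 4.2x annual figure quoted above at face value and assumes it holds steady every year, which is a simplification; the function and constant names are made up for illustration.

```python
# Illustrative arithmetic only: compounds the 4.2x-per-year figure quoted in the article.
# Assumption: the rate stays constant from year to year.
GROWTH_PER_YEAR = 4.2


def cumulative_growth(years: int, rate: float = GROWTH_PER_YEAR) -> float:
    """Return the total multiplier after `years` of compounding at `rate` per year."""
    return rate ** years


if __name__ == "__main__":
    # Print the cumulative multiplier for a few time horizons.
    for years in (1, 2, 5, 10):
        print(f"after {years:>2} years: {cumulative_growth(years):,.0f}x")
```

Five years of compounding at that rate works out to a multiplier of about 1,300, which is why the car analogy escalates so quickly.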

And it’s not just policy wonks and tech experts who are nervous about this gap. According to ARI’s data, a whopping 82% of the American public doesn’t trust tech executives to regulate AI on their own. That’s an overwhelming supermajority that crosses every political, demographic, and geographic boundary you can imagine. When more than four out of five Americans agree on anything in today’s polarized climate, that’s worth paying attention to. It suggests a deep-seated public unease with the “trust us, we’ve got this” approach that has characterized much of the tech industry’s relationship with regulation up to this point.

What This Means for AI Companies and Government Contractors

If ARI’s recommendation becomes reality, and that’s still a significant “if,” the landscape for AI companies doing business with the government would change dramatically. The most immediate effects would be felt in the bottom lines and timelines of firms that currently derive substantial revenue from federal contracts. Mandatory safety reviews don’t come cheap, and they certainly don’t come quickly.

First, there’s the cost factor. Comprehensive safety auditing for frontier AI models isn’t something you can knock out over a long weekend. It requires specialized expertise, extensive testing across numerous scenarios, documentation that would make a PhD dissertation look like a grocery list, and likely third-party verification to ensure nobody’s grading their own homework. For large, established AI companies with deep pockets and existing safety teams, this might be manageable, if annoying. It’s another line item in the budget, another box to check before the contract gets signed.

But for smaller startups and emerging players in the AI space, this could be a deal-breaker. These companies often operate on razor-thin margins, moving fast and iterating quickly because they have to—they’re racing against both the clock and much larger competitors. Adding extensive safety auditing requirements could price them out of the federal market entirely. It’s the classic regulatory paradox: rules designed to make everyone safer can also create barriers that favor established players and make it harder for innovative newcomers to compete. The question becomes whether the safety benefits outweigh the potential loss of innovation and competition, and reasonable people will disagree on where that balance should fall.

The Crypto Connection and Broader Regulatory Momentum

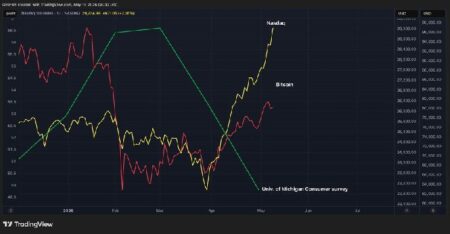

Interestingly, this isn’t happening in isolation. The regulatory landscape around AI is heating up from multiple directions, and some of those directions connect to worlds that might seem unrelated at first glance. In late March 2026, the Commodity Futures Trading Commission—better known as the CFTC—announced the creation of a task force specifically focused on how AI intersects with digital assets and cryptocurrency markets.

Now, ARI hasn’t explicitly drawn connections between its government procurement recommendations and the cryptocurrency world. The nonprofit is relatively new to the AI regulation scene and seems to be staying in its lane. But the timing is interesting, and the implications are worth considering. The CFTC’s move signals that regulators across different domains are starting to think seriously about AI governance and how these powerful systems might interact with other emerging technologies like blockchain.

For anyone invested in AI-related tokens, projects at the intersection of artificial intelligence and cryptocurrency, or even just the broader tech sector, this convergence of regulatory attention matters. If mandatory safety reviews gain real political traction—if they move from recommendation to requirement—the market will need to recalibrate. Compliance costs that were previously negligible or non-existent suddenly become material factors. Timelines for product deployment stretch out. The competitive landscape shifts to favor companies with the resources to navigate complex regulatory requirements. All of that needs to be priced into investment decisions, and savvy investors are already watching these policy discussions with keen interest.

Looking Ahead: The Political Reality and What Comes Next

So what happens now? That’s the multi-million-dollar question, and the answer depends on factors that go well beyond the technical merits of ARI’s proposal. This recommendation is aimed at the Trump administration, which means it lands in a political environment with its own priorities, pressures, and philosophical leanings. Will an administration generally skeptical of regulation embrace mandatory safety reviews for AI? Or will the national security and public safety arguments carry the day?

There’s also the question of how Congress responds. Major shifts in government procurement policy rarely happen through executive action alone. Lawmakers will want their say, and that means hearings, debates, and all the messy, slow-moving machinery of legislative politics. Some members of Congress have already shown keen interest in AI regulation, while others remain skeptical of adding more red tape to an industry they see as vital to American competitiveness. The public’s overwhelming distrust of tech self-regulation gives reform advocates a powerful talking point, but translating public sentiment into actual policy is never straightforward.

What seems certain is that the conversation about AI safety and government use isn’t going away. Whether ARI’s specific proposal becomes policy or not, the underlying concerns that motivated it—systems advancing faster than oversight can keep pace, vague contractual language creating accountability gaps, public mistrust of industry self-regulation—aren’t going to resolve themselves. One way or another, the government will need to develop more sophisticated approaches to evaluating, procuring, and deploying advanced AI systems. The only real question is whether that happens proactively, through measured policy development like what ARI is proposing, or reactively, after something goes seriously wrong. For everyone involved—from AI developers to government officials to ordinary citizens whose data and tax dollars are in play—getting ahead of the curve seems like the wiser bet.