Anthropic’s Historic Stand Against Pentagon Demands: What It Means for Tech and Crypto

A Defining Moment in AI Governance

Anthropic CEO Dario Amodei took a public stand against the United States Department of Defense on Thursday, refusing to yield to intense pressure from the Pentagon. The confrontation centers on the military’s demand for completely unrestricted access to Claude, Anthropic’s advanced AI technology. With a Friday deadline looming at the time of the announcement, the stakes are high for the AI startup, which is valued at $380 billion. The Defense Department has made its position clear: comply with its terms, or face expulsion from the entire US military supply chain. The standoff is a watershed moment in the relationship between technology companies and government power: it is the first time a major AI company has publicly refused a direct threat from the US government to surrender control of its technology. The outcome will likely shape how tech companies navigate the terrain between innovation, ethics, and national security obligations for years to come.

The Core of the Conflict: Where Principles Meet Power

The dispute comes down to two specific safeguards that Anthropic has built into Claude, restrictions that the company views as essential ethical boundaries but that the Pentagon considers unacceptable limitations. First, Anthropic prohibits the use of its AI for autonomous targeting of enemy combatants, meaning the technology cannot independently decide to kill human beings without direct human oversight. Second, the company bars the use of Claude for mass surveillance of American citizens, a protection designed to preserve civil liberties in an age when AI could make unprecedented invasions of privacy technologically feasible. For Anthropic, these are not negotiable business terms; they are fundamental ethical commitments about how powerful AI should and should not be deployed. In his blog post explaining the company’s position, Amodei called the Pentagon’s threats “inherently contradictory” because government officials are simultaneously labeling Anthropic a security risk and treating Claude as essential to national security. “Regardless, these threats do not change our position: we cannot in good conscience accede to their request,” Amodei wrote. The Pentagon’s “final offer,” which arrived overnight Wednesday, apparently tried to appear conciliatory on the surface while including legal language that would allow military officials to disregard those very safeguards whenever they deemed it necessary. In Anthropic’s view, that was no compromise at all.

How the Situation Escalated to a Public Showdown

The confrontation did not become public overnight; it followed a deliberate escalation by Defense Department officials. On Tuesday, Amodei met face-to-face with Defense Secretary Pete Hegseth. During that meeting, Pentagon officials laid out what would happen if Anthropic refused to comply, presenting three escalating consequences. First, Claude would be immediately removed from all military systems where it is currently deployed. Second, Anthropic would receive a formal “supply chain risk designation,” a label that would effectively blacklist the company throughout the defense contracting ecosystem by forcing every defense contractor to verify that it does not use Anthropic products anywhere in its operations, a devastating commercial blow. Third, and perhaps most dramatic, the Pentagon threatened to invoke the Defense Production Act of 1950, a Korean War-era law that gives the government legal authority to compel private companies to produce goods or provide services deemed essential to national defense.

On Thursday, Defense Department spokesman Sean Parnell made the ultimatum public and set a hard deadline: 5:01 pm Eastern Time on Friday for Anthropic to grant unrestricted access to Claude Gov, or face the consequences. “We will not let ANY company dictate the terms regarding how we make operational decisions,” Parnell declared on X (formerly Twitter), framing the standoff as a matter of governmental authority rather than a negotiation between partners. Not everyone in Washington supports the Pentagon’s hardball approach. Republican Senator Thom Tillis publicly questioned the wisdom of conducting the dispute in the media spotlight, asking reporters, “Why in the hell are we having this discussion in public? This is not the way you deal with a strategic vendor.”

What’s Actually at Risk for Anthropic and the AI Industry

The immediate financial exposure for Anthropic is substantial but manageable: a $200 million military contract hangs in the balance. The supply chain risk designation, however, carries implications that dwarf that contract value. Such a designation would create a compliance burden across the entire defense industrial base, as every contractor from aerospace giants to software vendors would need to audit its technology stack to ensure no Anthropic products appear anywhere in systems connected to defense work. Beyond Anthropic’s specific situation, the competitive landscape in the AI-for-defense market is shifting rapidly, and this standoff could accelerate that realignment. According to Axios, Elon Musk’s xAI has already signed an agreement allowing its Grok AI system to be used in classified government systems, and xAI accepted the Pentagon’s standard “all lawful purposes” language for classified work, which is exactly what Anthropic is refusing. Meanwhile, both OpenAI and Google are reportedly accelerating their negotiations to enter the classified AI space, likely watching the Anthropic situation to understand what boundaries they might face. Anthropic had enjoyed a first-mover advantage as the only AI company with clearance to work on classified material, but this stand could cost the company that competitive position entirely. Amodei defended the decision on technical grounds as well as ethical ones, arguing in his blog post that “frontier AI systems are simply not reliable enough to power fully autonomous weapons” and that without proper human oversight, such systems “cannot be relied upon to exercise the critical judgment that our highly trained, professional troops exhibit every day.” In this case, the ethical argument and the practical technical limitation point in the same direction.

Why the Cryptocurrency Community Should Be Watching Closely

At first glance, a dispute between an AI company and the Defense Department might seem disconnected from cryptocurrency and blockchain technology, but there are several reasons why the crypto community should pay close attention to how this situation unfolds. Most importantly, the Pentagon’s threat to invoke the Defense Production Act against a technology company could, if carried out, establish a legal and political precedent that extends far beyond the AI sector. If the government successfully uses this Korean War-era law to compel an AI company to strip away safety restrictions and ethical guardrails on national security grounds, that same legal framework could theoretically be applied to cryptocurrency companies, potentially forcing them to modify privacy features, weaken transaction safeguards, or build in backdoors for government surveillance. Government coercion that overrides a tech company’s architectural decisions about privacy and autonomy is precisely what decentralized cryptocurrency systems were designed to resist. The standoff also lends real-world support to the thesis behind decentralized AI development, a growing movement exploring how AI systems might be built and operated on decentralized infrastructure rather than by centralized corporate entities. A centralized AI provider, no matter how principled or well-funded, can be pressured, threatened, and potentially legally compelled to strip away the very guardrails that make its technology trustworthy. Decentralized alternatives, by their architecture, offer infrastructure that is more resilient against state coercion because there is no single corporate entity to threaten, no CEO to summon to meetings with cabinet secretaries, and no board of directors that might override ethical commitments under sufficient governmental pressure.

Broader Implications and What Comes Next

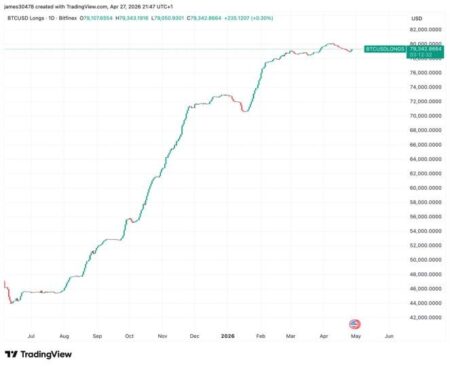

There is also a more direct connection between Anthropic and the cryptocurrency world. Anthropic’s $380 billion valuation and its AI-driven disruption of traditional software markets are putting pressure on private credit flows that historically correlate closely with Bitcoin price movements: when capital floods into high-growth AI companies, it can affect the broader risk appetite that influences crypto markets. Anthropic also has a historical link to cryptocurrency. FTX’s bankruptcy estate held a significant early stake in the company, acquired through Sam Bankman-Fried’s investments, and later sold it to help fund creditor repayments after the exchange’s collapse.

Once the Friday deadline passes, the real questions begin. Will the Pentagon actually follow through on its threats, or was the public ultimatum partly a negotiating tactic? If it does follow through, how will other AI companies respond: will they stand with Anthropic on principle, or will they quietly accept the Pentagon’s terms to capture the market share Anthropic abandons? And most broadly, what does this mean for every technology company trying to draw and hold a line between accepting lucrative government contracts and maintaining the integrity of its products and principles? The outcome will send signals far beyond the AI industry, influencing how tech companies, crypto projects, and privacy-focused technologies balance commercial success, governmental demands, and the values they claim to represent. Whether this becomes a cautionary tale about the costs of defying governmental power or an example of a tech company that stood firm on its principles may depend entirely on what happens in the days and weeks following that Friday deadline.