UK Government Cracks Down on Intimate Image Abuse: Tech Giants Face Strict New Rules

Prime Minister Issues Stern Warning to Social Media Platforms

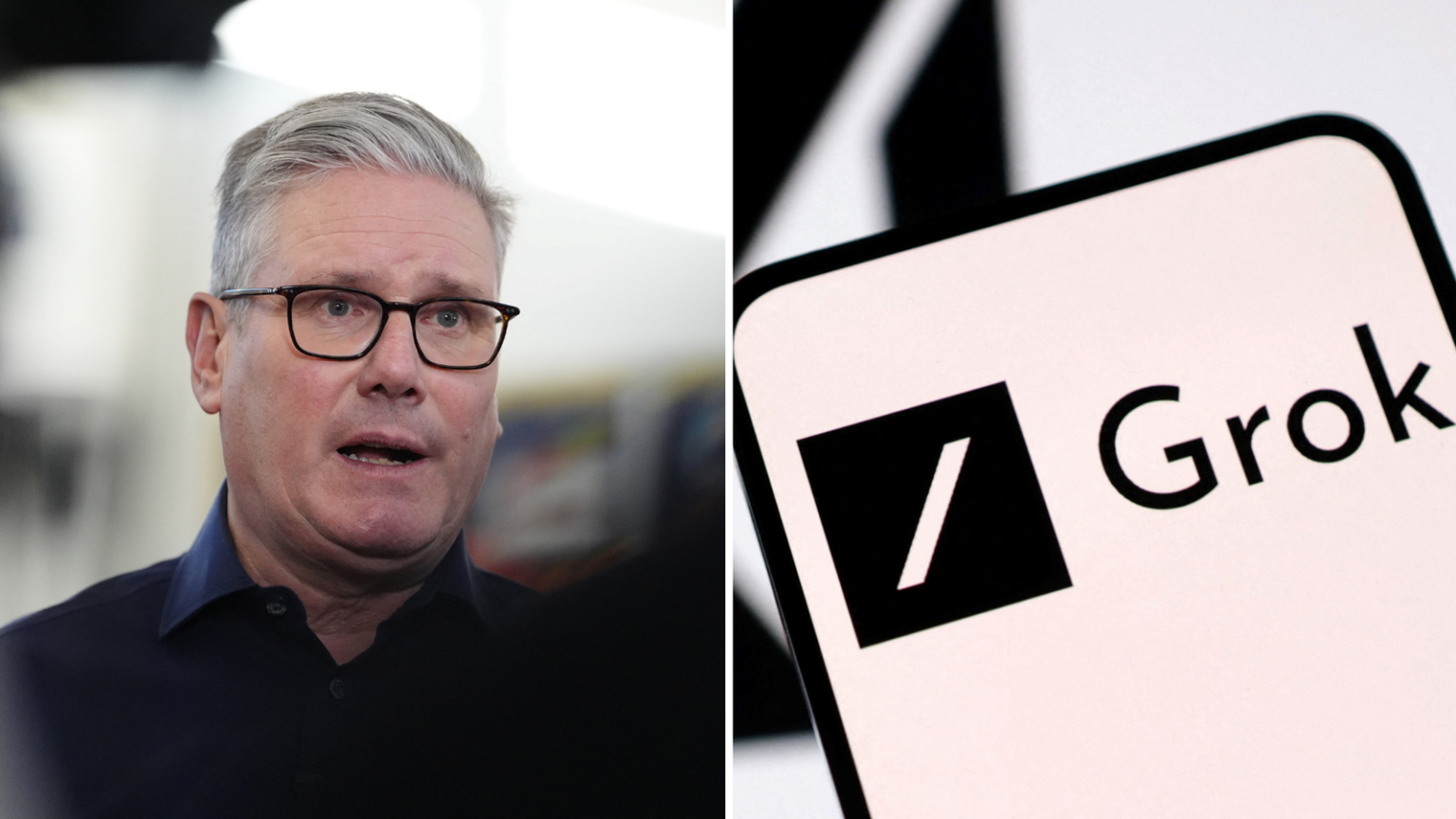

The UK government has announced new regulations that will change how technology companies handle abusive content on their platforms. In a move aimed at protecting women and girls from online harm, Prime Minister Sir Keir Starmer has issued a stern warning to tech giants, declaring that they are officially “on notice.” The new rules specifically target non-consensual intimate images, a growing problem that causes serious and lasting harm to victims. Under the proposed regulations, technology firms will face serious consequences if they fail to act swiftly when abusive content is reported. The government’s message is that the era of tech companies operating with minimal accountability is coming to an end. Sir Keir has emphasized that his administration will “leave no stone unturned” in the fight to protect vulnerable individuals from violence and abuse online. The announcement marks a significant shift in how the UK approaches online safety, placing responsibility squarely on the platforms where harmful content spreads.

The 48-Hour Rule and Severe Financial Penalties

At the heart of the new regulations is a straightforward requirement: companies must remove non-consensual intimate images within 48 hours of their being reported. The timeframe is not a suggestion; it will be a legal obligation with substantial consequences for non-compliance. Technology firms that miss the deadline could face fines of up to 10% of their qualifying worldwide revenue. For major platforms like Facebook, Instagram, TikTok, or X (formerly Twitter), this could translate to billions of pounds in penalties. Companies that persistently fail to comply could have their services banned from operating in the UK altogether. That would make this one of the more aggressive regulatory stances a government has taken against tech giants. The government has made clear that it expects these companies to treat intimate image abuse with the same urgency and seriousness that they currently apply to terrorist content or child sexual abuse material. The comparison is deliberate: ministers argue that the harm caused by non-consensual intimate images deserves the same priority as these other serious threats. The framework is being introduced through an amendment to the Crime and Policing Bill, which is currently making its way through Parliament.

Advanced Technology Solutions and Regulatory Innovation

The government’s approach does not stop at removal requirements; it also looks to technology to prevent repeated abuse. Media regulator Ofcom is exploring plans to treat non-consensual intimate images in a similar way to child sexual abuse material. This would involve digitally marking the images when they are first discovered, creating a permanent identifier that follows the content wherever it goes online. The technology would enable automatic detection and removal whenever someone attempts to re-share the images, even on different platforms or under different file names. This addresses one of the most distressing aspects of intimate image abuse: the images can resurface repeatedly, causing ongoing trauma to victims who thought the content had been removed. The government has also committed to publishing guidance for internet companies on how to block “rogue websites” that host abusive content but fall outside the reach of the Online Safety Act. These websites, often operating from jurisdictions with lax regulations, have become safe havens for harmful content. The guidance is intended to help technology companies tackle these sites, closing loopholes that have allowed abuse to continue unchecked.

The Catalyst: AI Tools and Recent Controversies

The timing of these regulations is not coincidental. They follow several high-profile controversies in which artificial intelligence tools were used to create abusive content. In January, X’s AI tool, Grok, came under intense scrutiny when it was discovered that the system could create AI-generated images that digitally “undressed” people without their consent. The backlash was swift, and it highlighted how rapidly evolving AI technology is creating new avenues for abuse that existing laws were not designed to address. In response to the outcry, X eventually disabled Grok’s ability to create these types of images, but by then the damage had been done and the vulnerability exposed. The government moved quickly to address the emerging threat. Earlier this month, the creation of non-consensual intimate images, including sexually explicit deepfakes, was officially criminalized. Sir Keir noted that his government had already taken “urgent action against chatbots and ‘nudification’ tools,” but emphasized that the new regulations go further. The UK is not alone in confronting the issue: Ireland’s data privacy regulator announced that X faces a European Union privacy investigation specifically over the non-consensual deepfakes created by Grok. These developments show that the problem is becoming a global concern, one likely to require coordinated international responses.

Political Debate and Criticism of Government Timing

While the regulations have been broadly welcomed by advocates for online safety, they have not escaped political controversy. Shadow technology secretary Julia Lopez, of the opposition Conservative Party, criticized the government for being slow to act. She pointed out that when Baroness Charlotte Owen, a Conservative peer, had previously put forward a similar proposal, the Labour government had failed to act. Lopez accused the prime minister of “playing catch-up” and suggested that the government was primarily motivated by a desire to “duck a major backbench rebellion” rather than genuine concern for victims. She claimed that despite the prime minister’s “tough rhetoric,” he had arrived late to the issue. These criticisms highlight the political sensitivities surrounding online safety regulation and the pressure on governments to act decisively. Whatever the political motivations, the substance of the regulations represents a significant step toward holding technology companies accountable. Technology Secretary Liz Kendall defended the measures, declaring that “the days of tech firms having a free pass are over.” She emphasized the human impact of the current system, noting that “no woman should have to chase platform after platform, waiting days for an image to come down.”

Broader Social Media Crackdown and Future Measures

These regulations on non-consensual intimate images are part of a broader government effort to reform how social media platforms operate in the UK. Earlier in the week, Sir Keir announced a wider crackdown on social media platforms, including plans to close legal loopholes that have allowed “vile illegal content created by AI” to proliferate. Downing Street has launched a consultation on measures to enhance online safety, particularly for young people. Among the proposals under consideration is an Australian-style ban on under-16s using social media platforms, a controversial measure that has sparked intense debate about balancing child protection with personal freedoms and parental rights. The government is ensuring it has the capability to implement such a ban quickly if the consultation recommends it. This approach signals that the UK government views online safety not as a single issue with a narrow solution, but as a complex challenge requiring several kinds of intervention. The focus extends beyond removing harmful content to questioning how young people interact with social media and whether current norms are acceptable.

While critics may question the timing or motivations behind these announcements, the regulations represent a substantial shift in the balance of power between governments and technology companies. For years, tech giants have largely self-regulated, with governments struggling to keep pace with rapid technological change. The new rules demonstrate that the UK government is no longer willing to accept that arrangement, particularly when it comes to protecting vulnerable individuals from serious harm. Whether the regulations will be effective remains to be seen, but they could mark a turning point in how democratic societies approach the governance of online spaces.