The Battle Against Social Media Addiction: Protecting Our Children in the Digital Age

A Wake-Up Call from Medical Experts

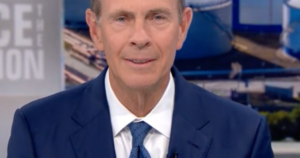

Former U.S. Surgeon General Jerome Adams has issued a stark warning that social media platforms are deliberately engineered to hook young people in much the same way tobacco companies once targeted children with cigarettes. Speaking on CBS’s “Face the Nation,” Adams didn’t mince words about the manipulative tactics employed by tech giants. He emphasized that the mounting evidence connecting social media use among young people to serious mental health issues, including anxiety, depression, and sleep deprivation, can no longer be ignored. This isn’t just about kids spending too much time on their phones; it’s about platforms that are intentionally designed to be addictive, exploiting the developing brains of adolescents for profit. Adams’s comments echo those of Vivek Murthy, who as Surgeon General released a comprehensive report in 2023 calling for immediate government intervention to protect children from what he termed “addictive apps and extreme and inappropriate content” on platforms like Instagram, TikTok, and Snapchat. The comparison to cigarette companies is particularly striking: just as Big Tobacco was eventually held accountable for deliberately targeting young people and hiding the health consequences of its products, Adams and other public health officials are now calling for similar accountability in the tech industry.

Landmark Legal Victories Signal a Turning Point

Two groundbreaking jury verdicts in recent days have sent shockwaves through Silicon Valley and may represent a watershed moment in how we regulate social media companies. In California, a jury found Meta (the parent company of Facebook and Instagram) and YouTube liable for knowingly creating platforms that caused mental health harm to young users while failing to adequately warn about these dangers. The plaintiff, identified only as Kaley G.M., was awarded a total of $6 million—$3 million in compensatory damages to cover the actual harm suffered, and another $3 million in punitive damages intended to punish the companies and deter similar behavior in the future. This wasn’t just a slap on the wrist; it was a clear message that tech companies can and will be held responsible when their products harm children. Meanwhile, in New Mexico, another jury sided with state prosecutors who argued that Meta had violated consumer protection laws related to child exploitation. The jurors determined that these violations numbered in the thousands, resulting in a staggering $375 million penalty. Together, these cases represent the first significant legal accountability for social media companies regarding their impact on youth mental health, potentially opening the floodgates for similar lawsuits across the country.

The Evidence Is Mounting: Social Media’s Toll on Young Minds

The scientific research supporting these legal actions and policy recommendations is becoming increasingly difficult to dismiss. Surgeon General Murthy’s 2023 report compiled extensive evidence showing disturbing correlations between early social media use and a range of mental health problems in young people. Adolescents who spend significant time on these platforms experience higher rates of anxiety and depression than their peers with limited exposure. Sleep deprivation is another critical concern: the blue light from screens, the anxiety-inducing content, and the fear of missing out keep young people scrolling late into the night when their developing bodies desperately need rest. This sleep deficit doesn’t just make kids tired; it impairs their mental health and academic performance, and it even contributes to obesity, as tired bodies crave high-calorie foods and lack energy for physical activity. What makes this particularly insidious is that these aren’t accidental side effects of a useful technology. According to evidence presented in recent lawsuits, these platforms were deliberately designed with features that exploit psychological vulnerabilities to maximize user engagement. Features like infinite scroll, algorithmic content feeds that serve increasingly extreme content to maintain attention, and notification systems engineered to create anxiety about missing social interactions are all intentional design choices meant to keep young people hooked.

Tech Companies Defend Their Record While Planning Appeals

Unsurprisingly, Meta and YouTube have pushed back against these verdicts and plan to appeal the decisions. In statements to CBS News, Meta insisted that they “work hard to keep people safe on our platforms and are clear about the challenges of identifying and removing bad actors or harmful content.” The company expressed confidence in what it called its “record of protecting teens online” and vowed to continue defending itself vigorously in court. This response has struck many critics as tone-deaf, given the overwhelming evidence presented in these cases that the companies not only knew about the harmful effects of their platforms but deliberately designed them to be addictive. The tech giants argue that they’ve implemented various safety features, parental controls, and content moderation systems to protect young users. However, critics point out that these measures are often insufficient, difficult to implement effectively, or easily circumvented by determined young users. More fundamentally, they argue that adding safety features as an afterthought doesn’t address the core problem: the platforms’ business models are built on maximizing user engagement, which inherently conflicts with user wellbeing. The companies profit from advertising revenue that increases with screen time, creating a fundamental incentive to keep users—including vulnerable young people—on their platforms as long as possible, regardless of the consequences to their mental health.

Australia Leads the Way: The Case for Age-Based Restrictions

Looking for solutions, Adams pointed to Australia’s pioneering approach as a potential model for the United States. Australia recently became the first country to implement a comprehensive ban on social media access for children under 16, a bold move that has sparked debate worldwide about how to balance protecting children with respecting digital rights and parental authority. Adams acknowledged that implementing such policies won’t be easy, but argued that the documented harm to children justifies taking strong action. In the United States, momentum is building at the state level, with approximately 25 states either discussing or having already passed legislation aimed at restricting social media and cell phone usage in schools. These school-based restrictions represent a more modest but still significant step toward addressing the problem. By creating phone-free zones during school hours, these policies aim to improve student focus, reduce cyberbullying, and give young people regular breaks from the constant pressure of social media. However, Adams suggests that school restrictions alone may not be sufficient, and that more comprehensive age-based restrictions similar to Australia’s approach may be necessary to truly protect children from what he characterizes as “unfettered access to screen time and social media.”

The Road Ahead: Regulation, Education, and Cultural Change

As these court cases continue and states experiment with different regulatory approaches, it’s clear that we’re at a pivotal moment in how society deals with social media’s impact on young people. The comparison to cigarette regulation that Adams draws is instructive—it took decades of mounting evidence, numerous lawsuits, and eventually comprehensive regulation before tobacco use among young people declined significantly. We may be at a similar turning point with social media, where the evidence has finally reached a critical mass that demands action. However, the path forward remains complex and contested. Some argue that government restrictions on social media access infringe on free speech and parental rights, and that education and individual responsibility should be the primary tools for addressing these issues. Others contend that, just as we don’t rely solely on education to protect children from dangerous products, we need regulatory guardrails to protect young people from predatory platform design. The recent jury verdicts suggest that the legal system is increasingly willing to hold tech companies accountable, potentially creating financial incentives for these companies to redesign their platforms with user wellbeing rather than just engagement in mind. Beyond regulation and litigation, there’s also a growing recognition that we need broader cultural change in how we think about technology and childhood. This means having honest conversations with young people about social media’s effects, modeling healthy technology use as adults, and creating spaces and opportunities for in-person connection that can compete with the pull of the screen. As Adams emphasized, understanding the harm that unfettered access to social media is causing our children is the first step toward creating a healthier digital environment for the next generation.